Docker for local web development, part 1: a basic LEMP stack

Last updated: 2023-04-17 :: Published: 2020-03-04

You can also subscribe to the RSS or Atom feed, or follow me on Twitter.

In this series

- Introduction: why should you care?

- Part 1: a basic LEMP stack ⬅️ you are here

- Part 2: put your images on a diet

- Part 3: a three-tier architecture with frameworks

- Part 4: smoothing things out with Bash

- Part 5: HTTPS all the things

- Part 6: expose a local container to the Internet

- Part 7: using a multi-stage build to introduce a worker

- Part 8: scheduled tasks

- Conclusion: where to go from here

Subscribe to email alerts at the end of this article or follow me on Twitter to be informed of new publications.


The first steps

I trust you've already read the introduction to this series and are now ready for some action.

The first thing to do is to head over to the Docker website and download and install Docker Desktop for Mac or PC, or head over here for installation instructions on various Linux distributions. If you're on Windows, make sure to install Windows Subsystem for Linux (WSL 2), and to configure Docker Desktop to use it.

The second thing you will need is a terminal.

Once both requirements are covered, you can either get the final result from the repository and follow this tutorial, or start from scratch and compare your code to the repository's whenever you get stuck. The latter is my recommended approach for Docker beginners, as the various concepts are more likely to stick if you write the code yourself.

Note that this post is quite dense because of the large number of notions being introduced. I assume no prior knowledge of Docker and I try not to leave any detail unexplained. If you are a complete beginner, make sure you have some time ahead of you and grab yourself a hot drink: we're taking the scenic route.

Identifying the necessary containers

Docker recommends running only one process per container, which roughly means that each container should be running a single piece of software. Let's remind ourselves what the programs underlying the LEMP stack are:

- L is for Linux;

- E is for Nginx (pronounced "engine-x");

- M is for MySQL;

- P is for PHP.

Linux is the operating system Docker runs on, so that leaves us with Nginx, MySQL and PHP. For convenience, we will also add phpMyAdmin into the mix. As a result, we now need the following containers:

- one container for Nginx;

- one container for PHP (PHP-FPM);

- one container for MySQL;

- one container for phpMyAdmin.

This is fairly straightforward, but how do we get from here to setting up these containers, and how will they interact with each other?

Docker Compose

Docker Desktop comes with a tool called Docker Compose that allows you to define and run multi-container Docker applications (if your system runs on Linux, you will need to install it separately).

Docker Compose isn't strictly necessary to manage multiple containers, since Docker alone can do it, but in practice doing so without Compose is very inconvenient (a bit like doing long division while there is a calculator on the desk: it is certainly not a bad skill to have, but it is also a tremendous waste of time).

The containers are described in a YAML configuration file and Docker Compose will take care of building the images and starting the containers, as well as some other useful things like automatically connecting the containers to an internal network.

Don't worry if you feel a little confused; by the end of this post it will all make sense.

Nginx

The YAML configuration file will actually be our starting point: open your favourite text editor and add a new docker-compose.yml file to a directory of your choice on your local machine (your computer), with the following content:

```yaml
version: '3.8'

# Services
services:

  # Nginx Service
  nginx:
    image: nginx:1.21
    ports:
      - 80:80
```

The version key at the top of the file indicates the version of the Compose file format we intend to use (3.8 was the latest version at the time of writing; recent releases of Docker Compose treat this key as obsolete and ignore it).

It is followed by the services key, which is a list of the application's components. For the moment we only have the nginx service, with a couple of keys: image and ports. The former indicates which image to use to create our service's container; in our case, version 1.21 of the Nginx image. Open the link in a new tab: it will take you to Docker Hub, which is the largest registry for container images (think of it as the Packagist or PyPI of Docker).

Why not use the latest tag? You will probably notice that most images have a latest tag corresponding to the most up-to-date version of the image. While it might be tempting to use it, you don't know how the image will evolve in the future; it is very likely that breaking changes will be introduced sooner or later. In the same way that you freeze an application's dependencies (via composer.lock for PHP or requirements.txt in Python, for example), using a specific version tag ensures your Docker setup won't break due to unforeseen changes.

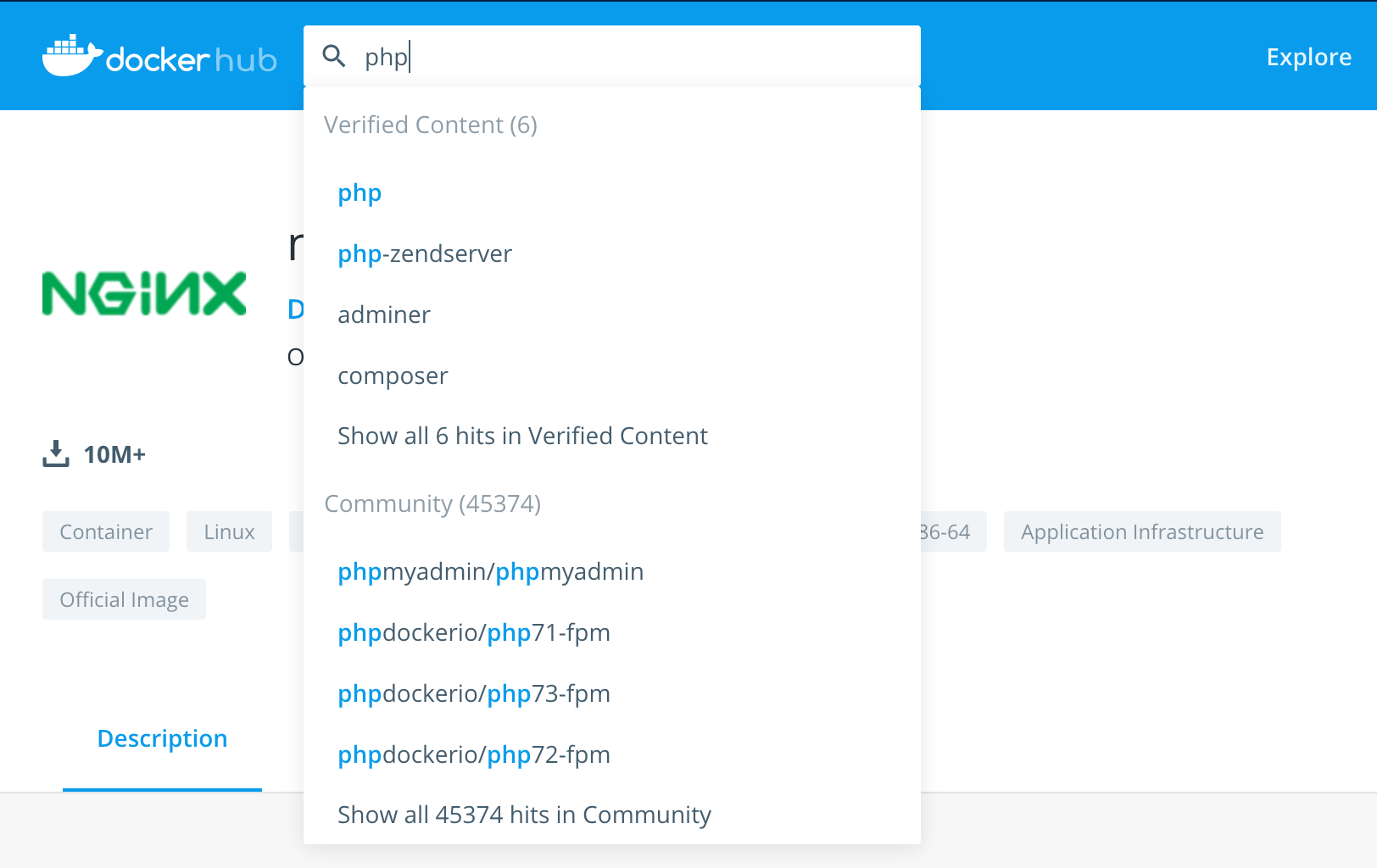

Much like a Github repository, image descriptions on Docker Hub usually do a good job at explaining how to use it and what the available versions are. Here, we are looking at Nginx's official image: Docker keeps a curated list of "official" images (sometimes maintained by upstream developers, but not always), which I always use whenever possible. They are easily recognisable: their page mentions Docker Official Images at the top, and Docker Hub separates them clearly from the community images when doing a search:

Note the "Verified Content" at the top

Back to docker-compose.yml: under ports, 80:80 indicates that we want to map our local machine's port 80 (used by HTTP) to the container's port 80, in HOST:CONTAINER order. In other words, when we access port 80 on our local machine (i.e. your computer), the request will be forwarded to port 80 of the Nginx container.
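To make the HOST:CONTAINER order concrete, here is a minimal Python sketch that splits a short-syntax mapping such as 80:80 (or 8080:80, which phpMyAdmin will use later on) into its two halves. The parse_port_mapping helper is purely illustrative and is not how Docker Compose parses the file. It only accepts the plain two-part form, whereas Compose also allows variants such as an IP address prefix, port ranges or a /udp suffix.

```python
from __future__ import annotations


def parse_port_mapping(spec: str) -> tuple[int, int]:
    """Split a "HOST:CONTAINER" string into (host_port, container_port).

    Hypothetical helper: only the plain two-part form is handled; IP address
    prefixes, port ranges and protocol suffixes such as /udp are rejected.
    """
    parts = spec.split(":")
    if len(parts) != 2:
        raise ValueError(f"expected HOST:CONTAINER, got {spec!r}")
    host_port, container_port = (int(part) for part in parts)
    return host_port, container_port


# The host port comes first: traffic reaching it is forwarded to the container port.
for spec in ("80:80", "8080:80"):
    host, container = parse_port_mapping(spec)
    print(f"localhost:{host} -> container port {container}")
```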

Let's test this out. Save the docker-compose.yml file, open a terminal and change the current directory to your project's before running the following command:

$ docker compose up -d

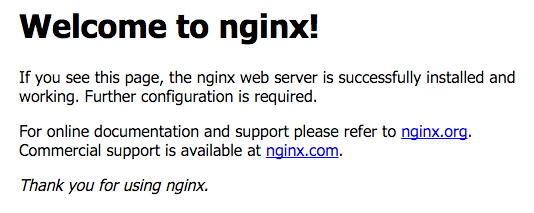

It might take a little while as the Nginx image will first be downloaded from Docker Hub. When it is done, open localhost in your browser, which should display Nginx's welcome page:

Congratulations! You have just created your first Docker container.

Let's break down that command: by running docker compose up -d, we essentially asked Docker Compose to build and start the containers described in docker-compose.yml; the -d option indicates that we want to run the containers in the background and get our terminal back.

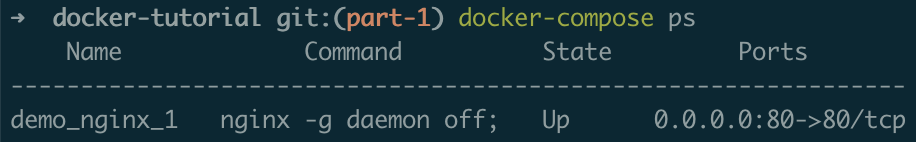

You can see which containers are currently running by executing the following command:

$ docker compose ps

Which should display something similar to this:

To stop the containers, simply run:

$ docker compose stop

At this point, you might be wondering what the difference is between a service, an image and a container. A service is just one of your application's components, as listed in docker-compose.yml. Each service refers to an image, and the service's containers are created from that image.

To help you grasp the nuance, think of an image as a class, and of a container as an instance of that class.
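The analogy can be taken quite literally. In the toy Python example below, the NginxImage class stands in for an image and each instance for a container created from it. The class name and its attributes are made up for illustration, and nothing here talks to Docker. Every instance shares what the class defines, yet stopping one leaves the others untouched.

```python
class NginxImage:
    """Plays the role of an image such as nginx:1.21: a fixed template."""

    tag = "nginx:1.21"  # shared by every "container" created from this class

    def __init__(self, name: str) -> None:
        # Each instance plays the role of a container with its own state.
        self.name = name
        self.running = True

    def stop(self) -> None:
        self.running = False


web = NginxImage("web-1")
other = NginxImage("web-2")
web.stop()
print(web.tag == other.tag, web.running, other.running)  # True False True
```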

Speaking of OOP, how about we set up PHP?

PHP

By the end of this section, we will have Nginx serving a simple index.php file via PHP-FPM, which is the most widely used process manager for PHP.

Not a PHP fan? As mentioned in the introduction, while PHP is used on the server side throughout this series, swapping it for another language should be fairly straightforward.

Replace the content of docker-compose.yml with this one:

```yaml
version: '3.8'

# Services
services:

  # Nginx Service
  nginx:
    image: nginx:1.21
    ports:
      - 80:80
    volumes:
      - ./src:/var/www/php
      - ./.docker/nginx/conf.d:/etc/nginx/conf.d
    depends_on:
      - php

  # PHP Service
  php:
    image: php:8.1-fpm
    working_dir: /var/www/php
    volumes:
      - ./src:/var/www/php
```

A few things going on here: let's forget about the Nginx service for a moment, and focus on the new PHP service instead. We start from the php:8.1-fpm image, corresponding to the tag 8.1-fpm of PHP's official image, featuring version 8.1 and PHP-FPM. Let's skip working_dir for now, and have a look at volumes. This section allows us to define volumes (basically, directories or single files) that we want to mount onto the container. This essentially means we can map local directories and files to directories and files on the container; in our case, we want Docker Compose to mount the src folder as the container's /var/www/php folder.

What's in the src/ folder? Nothing yet, but that's where we are going to place our application code. Once it is mounted onto the container, any change we make to our code will be immediately available, without the need to restart the container.
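One way to picture a bind mount is as a path translation between the container and your machine. Both sides see the very same files, which is why edits are visible straight away. The Python sketch below models the ./src:/var/www/php volume under that assumption. MOUNTS and host_path_for are hypothetical names; Docker does not expose such a function.

```python
from __future__ import annotations

from pathlib import PurePosixPath

# Illustrative model of the php service's bind mount: host path -> container path.
MOUNTS = {"./src": "/var/www/php"}


def host_path_for(container_path: str, mounts: dict[str, str] = MOUNTS) -> str | None:
    """Return the host path backing container_path, or None if it is not mounted."""
    target = PurePosixPath(container_path)
    for host, mount_point in mounts.items():
        mount = PurePosixPath(mount_point)
        # Compare whole path components, so /var/www/phpmyadmin does not match.
        if target == mount or mount in target.parents:
            # Re-anchor the part below the mount point onto the host directory.
            return str(PurePosixPath(host) / target.relative_to(mount))
    return None


print(host_path_for("/var/www/php/index.php"))  # src/index.php
print(host_path_for("/tmp/session"))            # None: not under a declared mount
```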

Create the src directory (at the same level as docker-compose.yml) and add the following index.php file to it:

```php
<!DOCTYPE html>
<html>
<head>
    <meta charset="UTF-8">
    <title>Hello there</title>
    <style>
        .center {
            display: block;
            margin-left: auto;
            margin-right: auto;
            width: 50%;
        }
    </style>
</head>
<body>
    <img src="https://tech.osteel.me/images/2020/03/04/hello.gif" alt="Hello there" class="center">
</body>
</html>
```

It only contains a little bit of HTML and CSS, but all we need for now is to make sure PHP files are correctly served.

Back to the Nginx service: we added a volumes section to it as well, where we mount the directory containing our code just like we did for the PHP service (this is so Nginx can see index.php; without it, Nginx would return a 404 Not Found when trying to access the file). This time we also want to import the Nginx server configuration that will point to our application code:

- ./.docker/nginx/conf.d:/etc/nginx/conf.d

As Nginx automatically reads files ending with .conf located in the /etc/nginx/conf.d directory, by mounting our own local conf.d directory in its place we make sure the configuration files it contains will be processed by Nginx on the container.
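Because the rule is a filename pattern, a leftover such as php.conf.bak in the same folder is simply ignored. The Python sketch below mimics that filter with fnmatchcase. It assumes the main nginx.conf contains include /etc/nginx/conf.d/*.conf;, as is the case with the official image. The loaded_configs helper is hypothetical and does not model finer details such as hidden files.

```python
from __future__ import annotations

from fnmatch import fnmatchcase


def loaded_configs(filenames: list[str], pattern: str = "*.conf") -> list[str]:
    """Return the files an `include .../conf.d/*.conf;` directive would pick up."""
    # fnmatchcase keeps matching case-sensitive, as on a Linux file system;
    # the result is sorted only to make the output deterministic.
    return sorted(name for name in filenames if fnmatchcase(name, pattern))


print(loaded_configs(["php.conf", "php.conf.bak", "README.md"]))  # ['php.conf']
```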

Create the .docker/nginx/conf.d folder and add the following php.conf file to it:

```nginx
server {
    listen 80 default_server;
    listen [::]:80 default_server;
    root /var/www/php;
    index index.php;

    location ~* \.php$ {
        fastcgi_pass php:9000;
        include fastcgi_params;
        fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
        fastcgi_param SCRIPT_NAME $fastcgi_script_name;
    }
}
```

Note: placing Docker-related files under a .docker folder is a common practice.

This is a minimalist PHP-FPM server configuration borrowed from Linode's website that doesn't have much to it; simply notice we point the root to /var/www/php, which is the directory onto which we mount our application code in both our Nginx and PHP containers, and that we set the index to index.php.

The following line is also interesting:

fastcgi_pass php:9000;

It tells Nginx to forward requests for PHP files to the PHP container's port 9000, which is the default port PHP-FPM listens on. Internally, Docker Compose will automatically resolve the php hostname to whatever private IP address it assigned to the PHP container.

This is another great feature of Docker Compose: at start-up, it will automatically set up an internal network on which each container is discoverable via its service's name.
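This name resolution can be checked from inside a container on the project's network. Below is a minimal Python sketch. It assumes a container that has Python installed, which the official PHP image does not necessarily ship. is_reachable is a hypothetical helper that resolves a hostname and attempts a TCP connection, which is essentially what Nginx needs to do for fastcgi_pass php:9000.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Resolve host (e.g. a Compose service name) and try a TCP connection."""
    try:
        address = socket.gethostbyname(host)  # on the Compose network, service names resolve
    except socket.gaierror:
        return False  # the name is unknown to this resolver
    try:
        with socket.create_connection((address, port), timeout=timeout):
            return True
    except OSError:
        return False  # resolved, but nothing accepted the connection
```

From such a container, is_reachable("php", 9000) should return True while the PHP container is up. Outside the Docker network, the php name normally won't resolve, and the function returns False.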

A word on networks: Docker Compose sets up a network with the bridge driver by default, but you can also define your own networks. I've personally never used any network other than the default one, but you can read about other options here.

Finally, let's have a look at the last configuration section of the Nginx service:

```yaml
    depends_on:
      - php
```

Sometimes, the order in which Docker Compose starts the containers matters. As we want Nginx to forward PHP requests to the PHP container's port 9000, the following error might occur if Nginx happens to be ready before PHP:

```text
[emerg] 1#1: host not found in upstream "php" in /etc/nginx/conf.d/php.conf:7
nginx_1 | nginx: [emerg] host not found in upstream "php" in /etc/nginx/conf.d/php.conf:7
nginx_1 exited with code 1
```

This causes the Nginx process to stop, and as the Nginx container will only run for as long as the Nginx process is up, the container stops as well. The depends_on configuration ensures the PHP container will start before the Nginx one, saving us an embarrassing situation.
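Conceptually, depends_on turns the services into a dependency graph, and containers are started in an order where every dependency comes first. The Python sketch below (3.9+, for graphlib) reproduces that ordering for the two services we have so far. start_order is a hypothetical helper that ignores everything else Docker Compose does at start-up.

```python
from __future__ import annotations

from graphlib import TopologicalSorter

# service -> services it depends on, as declared under depends_on
DEPENDS_ON = {
    "nginx": {"php"},
    "php": set(),
}


def start_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return an order in which every service starts after its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


print(start_order(DEPENDS_ON))  # ['php', 'nginx']
```

A circular dependency makes static_order() raise graphlib.CycleError, much as Docker Compose reports an error for circular depends_on declarations.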

Your directory and file structure should now look similar to this:

```text
docker-tutorial/
├── .docker/
│   └── nginx/
│       └── conf.d/
│           └── php.conf
├── src/
│   └── index.php
└── docker-compose.yml
```

We are ready for another test. Go back to your terminal and run the same command again (this time, the PHP image will be downloaded):

$ docker compose up -d

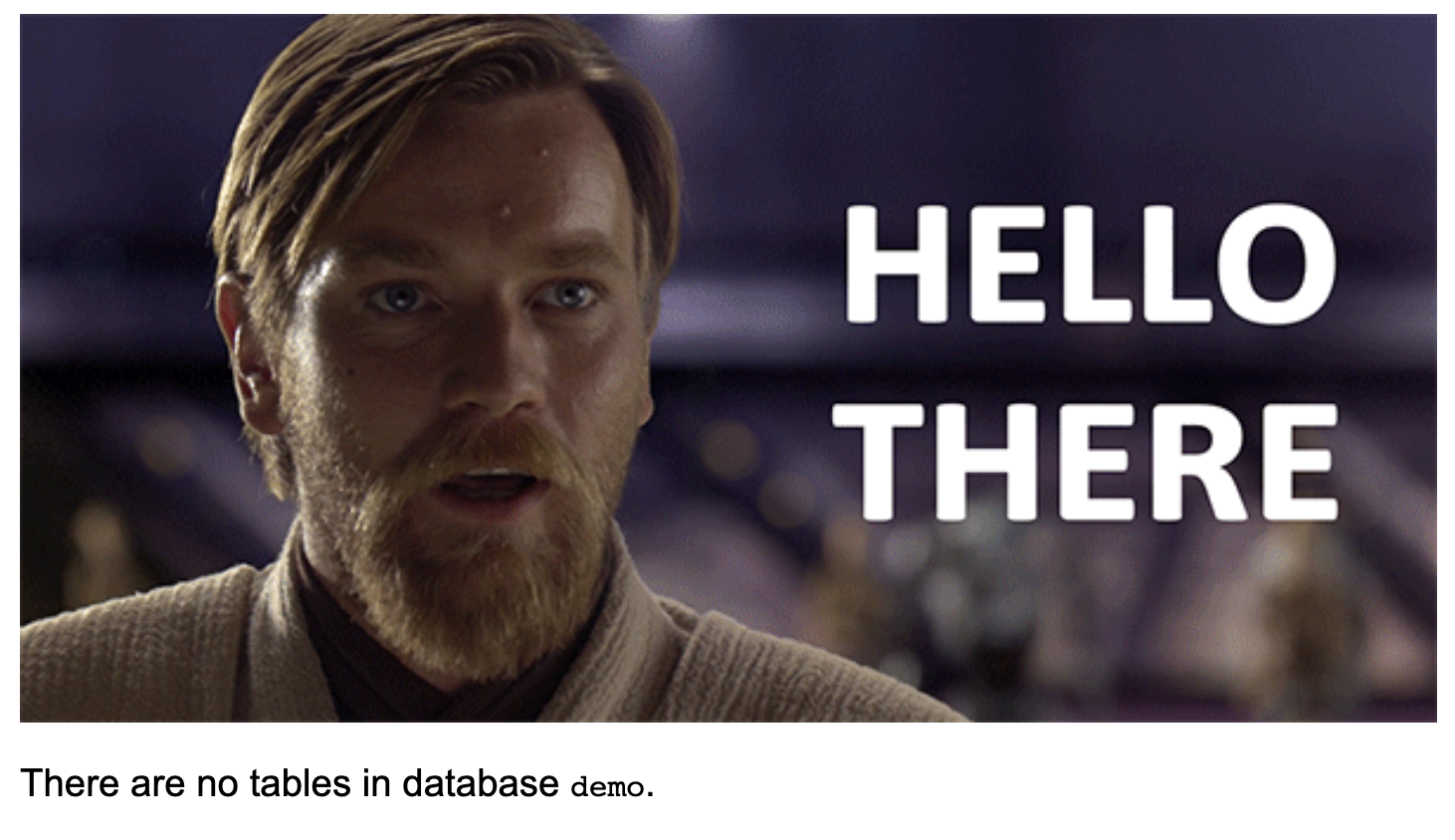

Refresh localhost: if everything went well you will be greeted by the man who can disappear in a bathrobe.

Update index.php (modify the content of the <title> tag, for instance) and reload the page: the change should appear immediately.

If you run docker compose ps you will observe that you now have two containers running, whose names end with nginx-1 and php-1 (nginx_1 and php_1 with older versions of Docker Compose).

Let's inspect the PHP container:

$ docker compose exec php bash

By running this command, we ask Docker Compose to execute Bash on the PHP container. You should get a new prompt indicating that you are currently under /var/www/php: this is what the working_dir configuration we ran into earlier is for. Run a simple ls to list the content of the directory: you should see index.php, which is expected as we mounted our local src folder onto the container's /var/www/php folder.

Run exit to leave the container.

Before we move on to the next section, let me show you one last trick. Go back to your terminal and run the following command:

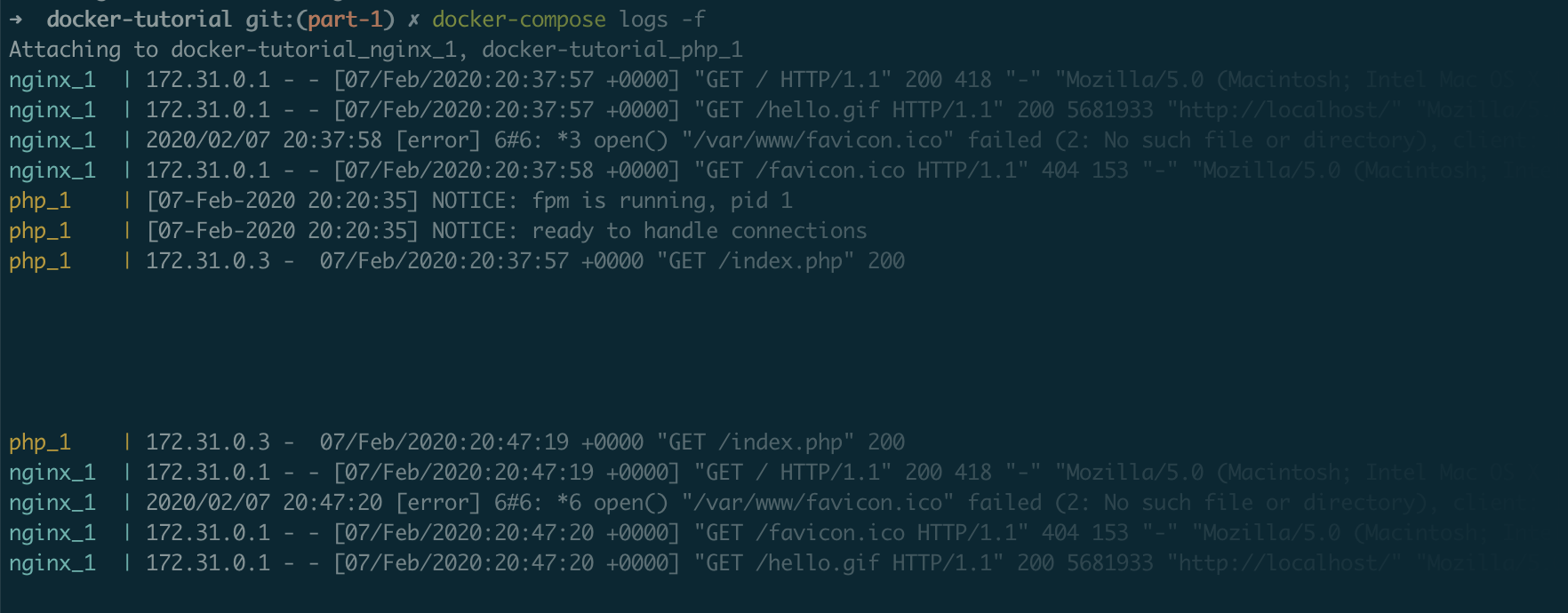

$ docker compose logs -f

Wait for a few logs to display, and hit the return key a few times to add some empty lines. Refresh localhost again and take another look at your terminal, which should have printed some new lines:

This command aggregates the logs of every container, which is extremely useful for debugging: if anything goes wrong, your first reflex should always be to look at the logs. You can also display the logs of a single service by appending its name (e.g. docker compose logs -f nginx).

Hit ctrl+c to get your terminal back.

MySQL

The last key component of our LEMP stack is MySQL. Let's update docker-compose.yml again:

```yaml
version: '3.8'

# Services
services:

  # Nginx Service
  nginx:
    image: nginx:1.21
    ports:
      - 80:80
    volumes:
      - ./src:/var/www/php
      - ./.docker/nginx/conf.d:/etc/nginx/conf.d
    depends_on:
      - php

  # PHP Service
  php:
    build: ./.docker/php
    working_dir: /var/www/php
    volumes:
      - ./src:/var/www/php
    depends_on:
      mysql:
        condition: service_healthy

  # MySQL Service
  mysql:
    image: mysql/mysql-server:8.0
    environment:
      MYSQL_ROOT_PASSWORD: root
      MYSQL_ROOT_HOST: "%"
      MYSQL_DATABASE: demo
    volumes:
      - ./.docker/mysql/my.cnf:/etc/mysql/my.cnf
      - mysqldata:/var/lib/mysql
    healthcheck:
      test: mysqladmin ping -h 127.0.0.1 -u root --password=$$MYSQL_ROOT_PASSWORD
      interval: 5s
      retries: 10

# Volumes
volumes:

  mysqldata:
```

The Nginx service is still the same, but the PHP one was slightly updated. We are already familiar with depends_on: this time, we indicate that the new MySQL service should be started before PHP. The other difference is the presence of the condition option; but before I explain it all, let's take a look at the new build section of the PHP service, which seemingly replaced the image one. Instead of using the official PHP image as is, we tell Docker Compose to use the Dockerfile from .docker/php to build a new image.

A Dockerfile is like the recipe to build an image: every image has one, even official ones (see for instance Nginx's).

Create the .docker/php folder and add a file named Dockerfile to it, with the following content:

```dockerfile
FROM php:8.1-fpm

RUN docker-php-ext-install pdo_mysql
```

PHP needs the pdo_mysql extension in order to connect to a MySQL database through PDO. Although the extension doesn't come with the official image, the Docker Hub description provides some instructions to install PHP extensions easily. At the top of our Dockerfile, we indicate that we start from the official image, and we proceed with installing pdo_mysql with a RUN command. And that's it! Next time we start our containers, Docker Compose will pick up the changes and build a new image based on the recipe we gave it.

A lot more can be done with a Dockerfile, and while this is a very basic example, some more advanced use cases will be covered in subsequent articles.

For the time being, let's update index.php to leverage the new extension:

```php
<!DOCTYPE html>
<html>
<head>
    <meta charset="UTF-8">
    <title>Hello there</title>
    <style>
        body {
            font-family: "Arial", sans-serif;
            font-size: larger;
        }

        .center {
            display: block;
            margin-left: auto;
            margin-right: auto;
            width: 50%;
        }
    </style>
</head>
<body>
    <img src="https://tech.osteel.me/images/2020/03/04/hello.gif" alt="Hello there" class="center">

    <?php
    $connection = new PDO('mysql:host=mysql;dbname=demo;charset=utf8', 'root', 'root');
    $query      = $connection->query("SELECT TABLE_NAME FROM information_schema.TABLES WHERE TABLE_SCHEMA = 'demo'");
    $tables     = $query->fetchAll(PDO::FETCH_COLUMN);

    if (empty($tables)) {
        echo '<p class="center">There are no tables in database <code>demo</code>.</p>';
    } else {
        echo '<p class="center">Database <code>demo</code> contains the following tables:</p>';
        echo '<ul class="center">';
        foreach ($tables as $table) {
            echo "<li>{$table}</li>";
        }
        echo '</ul>';
    }
    ?>
</body>
</html>
```

The main change is the addition of a few lines of PHP code to connect to a database that does not exist yet.

Let's now have a closer look at the MySQL service in docker-compose.yml:

```yaml
  # MySQL Service
  mysql:
    image: mysql/mysql-server:8.0
    environment:
      MYSQL_ROOT_PASSWORD: root
      MYSQL_ROOT_HOST: "%"
      MYSQL_DATABASE: demo
    volumes:
      - ./.docker/mysql/my.cnf:/etc/mysql/my.cnf
      - mysqldata:/var/lib/mysql
    healthcheck:
      test: mysqladmin ping -h 127.0.0.1 -u root --password=$$MYSQL_ROOT_PASSWORD
      interval: 5s
      retries: 10
```

The image section points to MySQL Server's image for version 8.0, and it is followed by a section we haven't come across yet: environment. It contains three keys – MYSQL_ROOT_PASSWORD, MYSQL_ROOT_HOST and MYSQL_DATABASE – which are environment variables that will be set on the container upon creation. They allow us to set the root password, authorise connections from any IP address, and create a default database respectively.

In other words, a demo database will automatically be created for us when the container starts.

Why MySQL Server? At the time of writing, containers based on MySQL's official image don't run on Macs with M1 chips. This is because the M1 is ARM-based and the official MySQL image isn't compatible with it, whereas the MySQL Server one is. Using the latter for our Docker-based environment will thus ensure maximum compatibility.

There are other specificities to be aware of when using Docker on M1 machines, some of which you can read about here.

After the environment key is the now familiar volumes. The first volume is a configuration file we will be using to set the default character set to utf8mb4 and the default collation to utf8mb4_unicode_ci, which is pretty standard nowadays.

Create the .docker/mysql folder and add the following my.cnf file to it:

```ini
[mysqld]
collation-server = utf8mb4_unicode_ci
character-set-server = utf8mb4
```
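Why utf8mb4 rather than utf8? In MySQL, utf8 is an alias for utf8mb3, which stores at most three bytes per character, while some characters (emoji, for instance) need four bytes in UTF-8. The small Python check below, with hypothetical helper names, shows the byte counts involved.

```python
def max_utf8_bytes(text: str) -> int:
    """Largest number of bytes any single character of text needs in UTF-8."""
    return max((len(char.encode("utf-8")) for char in text), default=0)


def fits_utf8mb3(text: str) -> bool:
    """True if every character fits MySQL's 3-byte utf8 (utf8mb3) character set."""
    return max_utf8_bytes(text) <= 3


for sample in ("hello", "café", "🐳"):
    print(sample, max_utf8_bytes(sample), fits_utf8mb3(sample))
# hello 1 True
# café 2 True
# 🐳 4 False
```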

Password plugin error? Some older versions of PHP are incompatible with MySQL's new default password plugin introduced with version 8. If you require such a version, you might also need to add the following line to the configuration file:

default-authentication-plugin = mysql_native_password

If the containers are already running, destroy them as well as the volumes with docker compose down -v and run docker compose up -d again.

The second volume looks a bit different from what we have seen so far: instead of pointing to a local folder, it refers to a named volume defined in a whole new volumes section, which sits at the same level as services:

```yaml
# Volumes
volumes:

  mysqldata:
```

We need such a volume because without it, every time the mysql service's container is destroyed, the database is destroyed with it. To make it persistent, we tell the MySQL container to store its data in the mysqldata volume, which uses the local driver by default (just like networks, volumes come with various drivers and options, which you can learn about here). As a result, a local directory is mounted onto the container, the difference being that instead of specifying which one, we let Docker pick and manage its location.

The last section is a new one: healthcheck. It allows us to specify the condition under which a container is considered ready, as opposed to just started. In this case, it is not enough for the MySQL container to be started: we also want the database to be created before the PHP container tries to access it. In other words, without this health check the PHP container might try to access the database before it exists, causing connection errors.

This is what these lines in the PHP service description were about:

```yaml
    depends_on:
      mysql:
        condition: service_healthy
```

By default, depends_on will just wait for the referenced containers to be started, unless we specify otherwise. This health check might not work on the first attempt, however; that's why we set it up to retry every 5 seconds up to 10 times, using the interval and retries keys respectively.

The health check itself uses mysqladmin, a MySQL server administration program, to ping the server until it gets a response. It does so using the root user and the value set in the MYSQL_ROOT_PASSWORD environment variable as the password (which also happens to be root in our case).
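The interval and retries keys can be pictured as the loop below. This is a simplified Python model, not Docker's implementation. wait_until_healthy and its parameters are hypothetical. The real health check keeps running after the container becomes healthy, and settings such as timeout and start_period are left out. The sleep parameter is injectable so the example runs instantly.

```python
import time
from typing import Callable


def wait_until_healthy(
    check: Callable[[], bool],
    interval: float = 5.0,
    retries: int = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Run check() up to `retries` times, pausing `interval` seconds in between.

    Returns True as soon as a check passes ("healthy"), or False once every
    attempt has failed ("unhealthy").
    """
    for attempt in range(1, retries + 1):
        if check():
            return True
        if attempt < retries:
            sleep(interval)
    return False


# Simulate mysqladmin ping failing twice while MySQL initialises, then succeeding.
attempts = iter([False, False, True])
print(wait_until_healthy(lambda: next(attempts), sleep=lambda seconds: None))  # True
```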

Go back to your terminal and run docker compose up -d again. Once it is done downloading the MySQL image and all of the containers are up and running, refresh localhost. You should see this:

We now have Nginx serving PHP files that can connect to a MySQL database, meaning our LEMP stack is pretty much complete. The next steps are about improving our setup, starting with seeing how we can interact with the database in a user-friendly way.

phpMyAdmin

When it comes to dealing with a MySQL database, phpMyAdmin remains a popular choice; conveniently, they provide a Docker image which is pretty straightforward to set up.

Not using phpMyAdmin? If you are used to some other tool like Sequel Ace or MySQL Workbench, you can simply update the MySQL configuration in docker-compose.yml and add a ports section mapping your local machine's port 3306 to the container's:

```yaml
    ...
    ports:
      - 3306:3306
    ...
```

From there, all you need to do is configure a database connection in your software of choice, using localhost:3306 as the host and root / root as username and password, to access the MySQL database while the container is running.

If you choose to do the above, you can skip this section altogether and move on to the next one.

Open docker-compose.yml one last time and add the following service configuration after MySQL's:

```yaml
  # PhpMyAdmin Service
  phpmyadmin:
    image: phpmyadmin/phpmyadmin:5
    ports:
      - 8080:80
    environment:
      PMA_HOST: mysql
    depends_on:
      mysql:
        condition: service_healthy
```

We start from version 5 of the image and we map the local machine's port 8080 to the container's port 80. We indicate that the MySQL container should be started and ready first with depends_on, and set the host that phpMyAdmin should connect to using the PMA_HOST environment variable (remember that Docker Compose will automatically resolve mysql to the private IP address it assigned to the container).

Save the changes and run docker compose up -d again. Once the image is downloaded and everything is up, visit localhost:8080:

Enter root / root as username and password, create a couple of tables under the demo database and refresh localhost to confirm they are correctly listed.

And that's it! That one was easy, right?

Let's move on to setting up a proper domain name for our application.

Domain name

We have come a long way already and all that's left for today mostly boils down to polishing up our setup. While accessing localhost is functional, it is not particularly user friendly.

Replace the content of .docker/nginx/conf.d/php.conf with this one:

```nginx
server {
    listen 80;
    listen [::]:80;
    server_name php.test;
    root /var/www/php;
    index index.php;

    location ~* \.php$ {
        fastcgi_pass php:9000;
        include fastcgi_params;
        fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
        fastcgi_param SCRIPT_NAME $fastcgi_script_name;
    }
}
```

We essentially removed default_server (since the server will now be identified by a domain name) and added the server_name configuration, giving it the value php.test, which will be our application's address.
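This also explains why the application keeps answering on localhost once the server has a name. Below is a much-simplified Python model of how Nginx picks a server block for a request's Host header. An exact server_name match wins. Failing that, Nginx uses the server marked default_server for the port, or the first server defined if none is marked. The pick_server function and its dictionaries are hypothetical, and real Nginx also supports wildcard and regular-expression names.

```python
from __future__ import annotations


def pick_server(host: str, servers: list[dict]) -> dict | None:
    """Choose the server block that would handle a request for `host`.

    Simplified: exact names only, all servers assumed to listen on port 80.
    """
    host = host.lower()
    for server in servers:
        if host in server.get("server_names", ()):
            return server
    for server in servers:
        if server.get("default_server"):
            return server
    # Without an explicit default_server, Nginx falls back to the first one.
    return servers[0] if servers else None


# Our updated php.conf: a single server block named php.test.
SERVERS = [{"root": "/var/www/php", "server_names": {"php.test"}}]

print(pick_server("php.test", SERVERS)["root"])   # /var/www/php
print(pick_server("localhost", SERVERS)["root"])  # /var/www/php (fallback)
```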

There is one extra step we need to take for this to work: as php.test is not a real domain name (it is not registered anywhere), you need to edit your local machine's hosts file so it recognises it.

Where to find the hosts file? On UNIX-based systems (essentially Linux distributions and macOS), it is located at /etc/hosts. On Windows, it should be located at c:\windows\system32\drivers\etc\hosts. You will need to edit it as administrator (this tutorial should help if you are unsure how to do that).

Add the following line to your hosts file and save it:

127.0.0.1 php.test
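Each line of the hosts file is an IP address followed by one or more names, and # starts a comment. The Python sketch below parses that format to show what the new line declares. parse_hosts is a hypothetical helper; it is your operating system's resolver, not code of your own, that actually reads the file.

```python
from __future__ import annotations


def parse_hosts(text: str) -> dict[str, str]:
    """Map each hostname in a hosts-file body to its IP address."""
    mapping: dict[str, str] = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding spaces
        if not line:
            continue
        address, *names = line.split()
        for name in names:
            # Keep the first address listed for a name, as resolvers usually do.
            mapping.setdefault(name.lower(), address)
    return mapping


SAMPLE = """\
127.0.0.1   localhost
127.0.0.1   php.test   # Docker tutorial
"""
print(parse_hosts(SAMPLE)["php.test"])  # 127.0.0.1
```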

Since we haven't updated docker-compose.yml or any Dockerfile, this time a simple docker compose up -d won't be enough for Docker Compose to pick up the changes. We need to explicitly tell it to restart the containers so the Nginx process is restarted and the new configuration is taken into account:

$ docker compose restart

Your application is now available at php.test, as well as localhost.

Environment variables

We are almost there, folks! The last thing I want to show you today is how to set environment variables for the whole Docker Compose project, rather than for a specific service like we have been doing so far (using the environment section in docker-compose.yml).

Before we do that, I would like you to list the current containers:

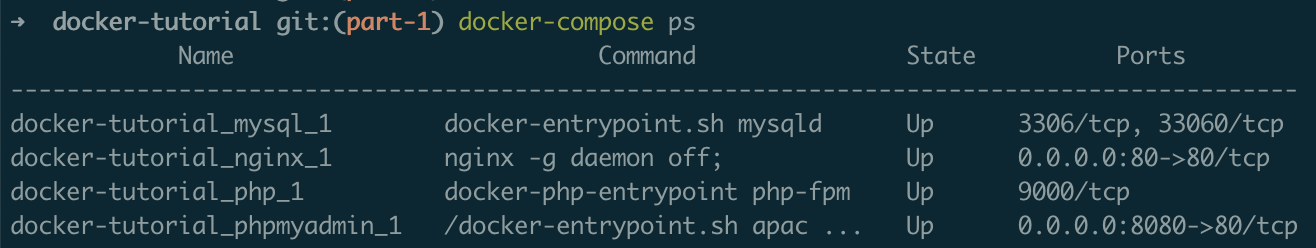

$ docker compose ps

Notice how each container is prefixed by the name of your project directory (which would be docker-tutorial if you cloned the repository):

Now, before we proceed further, let's destroy our containers and volumes so we can start afresh:

$ docker compose down -v

Create a .env file alongside docker-compose.yml, with the following content:

COMPOSE_PROJECT_NAME=demo

Save the file and run docker compose up -d again, followed by docker compose ps: each container is now prefixed with demo- (or demo_ with older versions of Docker Compose).
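To make the naming rule concrete, the Python sketch below reads a simple .env file and derives a container name from the project name, service name and replica index. read_env and container_name are hypothetical helpers. Real .env files support more syntax (such as quoted values) than this parser. Recent versions of Docker Compose join the parts with hyphens (demo-nginx-1), while the older docker-compose tool used underscores (demo_nginx_1).

```python
from __future__ import annotations


def read_env(text: str) -> dict[str, str]:
    """Parse plain KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:  # ignore lines without an '=' sign
            values[key.strip()] = value.strip()
    return values


def container_name(env: dict[str, str], directory: str, service: str,
                   index: int = 1, separator: str = "-") -> str:
    """Build <project><sep><service><sep><index>, preferring COMPOSE_PROJECT_NAME."""
    project = env.get("COMPOSE_PROJECT_NAME", directory)
    return separator.join([project, service, str(index)])


env = read_env("COMPOSE_PROJECT_NAME=demo\n")
print(container_name(env, "docker-tutorial", "nginx"))  # demo-nginx-1
print(container_name({}, "docker-tutorial", "nginx"))   # docker-tutorial-nginx-1
```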

Why is this important? By assigning a unique name to your project, you ensure that no name collision will happen with other ones. If several Docker-based projects on your system share the same directory name and more than one of them uses a service called nginx, Docker may complain that a container with that name (e.g. xxx-nginx-1) already exists when you bring up one of these environments.

While this might not seem essential, it is an easy way to avoid potential hassle in the future, and provides some consistency across the team. Speaking of which: if you've dealt with .env files before, you probably know that they are not supposed to be versioned and pushed to a code repository. Assuming you are using Git, you should add .env to a .gitignore file, and create a .env.example file that will be shared with your coworkers.

Here is what the final directory and file structure should look like:

```text
docker-tutorial/
├── .docker/
│   ├── mysql/
│   │   └── my.cnf
│   ├── nginx/
│   │   └── conf.d/
│   │       └── php.conf
│   └── php/
│       └── Dockerfile
├── src/
│   └── index.php
├── .env
├── .env.example
├── .gitignore
└── docker-compose.yml
```

That is the extent to which we need environment variables for this article, but you can read more about them over here.

Commands summary and cleaning up your environment

Before we wrap up, I'd like to summarise all of the commands we have been using so far, and throw in a few more so you can clean up your environment if you wish to. Use this section as a reference to come back to whenever you need it, especially in the beginning.

Remember that they need to be run from your project's directory.

Start and run the containers in the background

$ docker compose up -d

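If you update docker-compose.yml, running this command again will pick up the changes automatically. If you modify a Dockerfile, add the --build option (docker compose up -d --build) so the image is rebuilt before the containers are recreated.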

Restart the containers

$ docker compose restart

Useful when some changes require a process to restart, e.g. restart Nginx to pick up some server configuration changes.

List the containers

$ docker compose ps

Tail the containers' logs

$ docker compose logs -f [service]

The [service] argument is optional: add a service name (e.g. nginx) to display this service's logs only. Drop the -f option to print the logs once instead of following them.

Stop the containers

$ docker compose stop

Stop and destroy the containers

$ docker compose down

Stop and destroy the containers and their volumes (including named volumes)

$ docker compose down -v

Delete everything, including images and orphan containers

$ docker compose down -v --rmi all --remove-orphans

Orphan containers are containers left behind by services that used to be defined in docker-compose.yml but no longer are (after renaming a service, for instance), which sometimes happens while you're building your Docker setup.

Conclusion

Here is a summary of what we have covered today:

- what Docker Compose is;

- what the difference between a service, an image and a container is;

- how to search for images on Docker Hub;

- what running a single process per container means;

- how to split our application into different containers accordingly;

- how to describe services in a docker-compose.yml file;

- what a Dockerfile is;

- how to declare and use volumes;

- how Docker Compose makes containers discoverable on an internal network;

- how to assign a domain name to our application;

- how to set environment variables;

- a bunch of useful commands.

That is an awful lot to digest. Congratulations if you made it this far; that must have been a real effort. The good news is that the next posts will be lighter, and the result of this one can already be used as a decent starting point for any web project.

Don't worry if you feel a little bit confused or overwhelmed; that is perfectly normal. Docker is a strong case for "practice makes perfect": it is only by using it regularly that its concepts eventually click.

In the next part of this series, we will see how to choose and shrink the size of our images. Subscribe to email alerts below so you don't miss it, or follow me on Twitter where I will share my posts as soon as they are published.
