Docker for local web development, part 8: scheduled tasks

Last updated: 2021-11-25 :: Published: 2020-06-08

You can also subscribe to the RSS or Atom feed, or follow me on Twitter.

In this series

- Introduction: why should you care?

- Part 1: a basic LEMP stack

- Part 2: put your images on a diet

- Part 3: a three-tier architecture with frameworks

- Part 4: smoothing things out with Bash

- Part 5: HTTPS all the things

- Part 6: expose a local container to the Internet

- Part 7: using a multi-stage build to introduce a worker

- Part 8: scheduled tasks ⬅️ you are here

- Conclusion: where to go from here

Introduction

Once we start to get comfortable around Docker and make it a full component of our development environment, inevitably there will come a time when we have to deal with some form of task scheduling.

The first reflex in that case is usually to try and fit the familiar cron into the picture – and it's often surprisingly painful. In the best-case scenario, we end up with a crontab that is copied into the image and installed via its Dockerfile, or something along those lines; more often than not, though, we end up with something much clumsier.

At the end of the previous part, we were left with the Laravel scheduler to run manually, which we expect to be handled as a cron entry. To address this within our setup, we could use an approach similar to the one described above – it would require a version of the image where the running process is the cron daemon instead of PHP-FPM, which could be achieved with a separate build stage. We would also need a service in docker-compose.yml, targeting the new stage in order to build and use the corresponding image.

This wouldn't be too bad, and to be honest it would probably be enough in most cases. But it is not scalable, nor is it the Docker way.

Imagine your application grows in complexity and you decide to add a microservice, maybe to break up a monolith, or to do something completely different using another language. Whatever this microservice does, imagine it needs to run its own tasks periodically. Following the same logic as above, you'd end up with two new services in docker-compose.yml: one to run the microservice in a regular way (e.g. as an API), and another one to manage the scheduled tasks with cron. Now imagine you need yet another microservice, also with its own cron jobs – that's another two new services to add to docker-compose.yml. Rinse and repeat – you see where this is going.

One of Docker's biggest strengths in my opinion is that it enables us to think at the system level rather than the application level – instead of considering each part of the system separately, we're invited to take a step back and consider it as a whole. As scheduling tasks is a common need for many parts of a system, there must be a way to manage them at the system level, in a unified way, instead of addressing the issue for each individual part separately.

The solution is to introduce an independent, system-wide scheduler.

The assumed starting point of this tutorial is where we left things at the end of the previous part, corresponding to the repository's part-7 branch.

If you prefer, you can also directly check out the part-8 branch, which is the final result of this article.

Setting up the scheduler

There are multiple schedulers out there that we can use with Docker; they all more or less work in a similar way, but I settled on Ofelia for its simplicity. Ofelia is written in Go and allows us to quickly define tasks to be run periodically, targeting any of our setup's containers.

There are various ways to define scheduled tasks with Ofelia, mostly differing in where the tasks' configuration is located. I personally favour the approach involving a config.ini file, as it doesn't require updating the other services, which keeps coupling to a minimum and makes the scheduler easy to replace if necessary (the alternative relies on Docker labels added to the targeted services' definitions in docker-compose.yml, which I'm less happy with).

Let's see what this looks like in practice. Create a new scheduler folder under the .docker directory at the root, and add a config.ini file to it, with the following content:

```ini
[job-exec "Laravel Scheduler"]
schedule = @every 1m
container = demo-backend-1
command = php /var/www/backend/artisan schedule:run
```

Here we've defined a single job – Laravel's schedule:run Artisan command – to be run every minute on the backend's container. job-exec indicates that we'll be using the already running backend container (as you may have guessed, there's also a job-run option to spin up a fresh container instead), whose name we assigned to the container key: demo-backend-1. This is Ofelia's only small defect in my opinion: it won't guess the container's name based on the service's, and requires us to specify the running container's expected name instead.

It's not really a big deal since we know the container's name is made of the project's name, the service's name and the container's number, but automatic name resolution would definitely be a plus. There are discussions about this, but it looks like it won't be implemented just yet.
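For comparison, here is a rough sketch of how a job-run entry might sit next to the job-exec one in the same config.ini. The second job's name, image and command are placeholders picked for illustration only and are not part of this series' setup:

```ini
; Runs inside the backend container that is already up
; (the approach used in this article)
[job-exec "Laravel Scheduler"]
schedule = @every 1m
container = demo-backend-1
command = php /var/www/backend/artisan schedule:run

; Hypothetical example: starts a fresh container from the given image
; for each run, instead of targeting a running container by name
[job-run "Print Date"]
schedule = @hourly
image = alpine:latest
command = date
```

Since job-run takes an image rather than a container name, it sidesteps the naming question, but the command no longer has access to the state of the running backend container, which is why job-exec is the better fit for the Laravel scheduler.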

In any case, what's interesting to observe here is that instead of being run from inside the container, the command ends up being exposed to and run from the outside. This is how scheduling tasks in Docker should be contemplated: as some sort of internally exposed API for command line operations, centrally managed by a scheduler.

We now need to define a service for that scheduler, to be added to docker-compose.yml:

```yaml
  # Scheduler Service
  scheduler:
    image: mcuadros/ofelia:latest
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - ./.docker/scheduler/config.ini:/etc/ofelia/config.ini
    depends_on:
      - backend
```

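Note that the config.ini file is mounted into the container, and that the backend container is required to be started first. The Docker socket is mounted as well, which is what allows Ofelia to talk to the Docker daemon and execute commands in the other containers.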

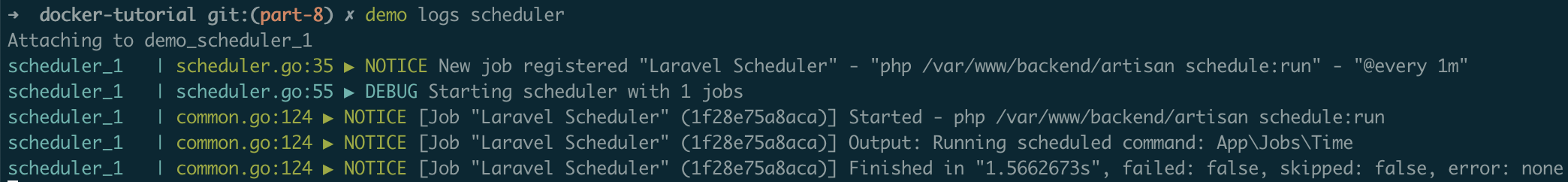

Believe it or not, we're pretty much done. Save the file and run demo start to download Ofelia's image and start the scheduler, before running demo logs scheduler to ensure it is functional:

You should get an output similar to the above after a minute or so, and see the current time being logged in the backend's storage/logs/laravel.log file, which is the result of the execution of the job we defined in the previous part.

That's it!

Using this approach, the configuration of scheduled tasks is completely decoupled from the services they target. No need to rebuild your services' images to change the cron entries – just update config.ini and restart Ofelia's container.
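To illustrate, this is roughly what config.ini could look like if a second service with its own periodic task joined the setup later on. The second job is entirely hypothetical: the reports service, its container name and its command are assumptions made up for this sketch.

```ini
; Existing job from this article
[job-exec "Laravel Scheduler"]
schedule = @every 1m
container = demo-backend-1
command = php /var/www/backend/artisan schedule:run

; Hypothetical job for an imaginary "reports" microservice:
; the container name and command are illustrative assumptions
[job-exec "Reports Cleanup"]
schedule = @daily
container = demo-reports-1
command = node /app/cleanup.js
```

Adding a job only touches this one file; neither service's image nor its definition in docker-compose.yml has to change, although the targeted container does need to be running for the job to succeed.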

Conclusion

Like I said in the introduction, the regular cron approach would probably do the trick in most cases, and running a scheduler like Ofelia instead might feel a bit like overkill. But the former arguably takes more effort to implement than the latter, and if you can go for a simpler, more scalable way from the get-go, why wouldn't you?

Moreover, using a scheduler to run tasks across systems isn't only convenient locally – that's actually how it works in most production environments too (see for example AWS's Scheduled Tasks, or Kubernetes' CronJob).

This is the thing with Docker: it's different. If you haven't really dealt with DevOps stuff before, there is still a lot to learn, but that's all you need to do – learn. On the other hand, people who are used to dealing with VMs (locally or not) are likely to have a tougher time, because they need to unlearn part of what they know first. They will initially try to provision a container like a VM, or try to configure cron jobs like they used to – they will try to force square pegs into round holes, simply because they're used to square holes.

I went through this as well.

Docker is a paradigm shift, and the sooner we accept this – the sooner we let go of our old ways and embrace the Docker way – the easier it gets. Don't get me wrong though: there is substantial benefit to this trade, for enabling system-level thinking unlocks a whole new world of virtually endless possibilities.

Today's article is the last of the series, but I cannot wrap this up without a proper conclusion, which will be the next and final instalment. Subscribe to email alerts below so you don't miss it, or follow me on Twitter where I will share my posts as soon as they are published.
